A zero-boundary database architecture.

From wire to GPU on Apple Silicon.

Treating data flows as a compute primitive.

Two technologies. One architecture.

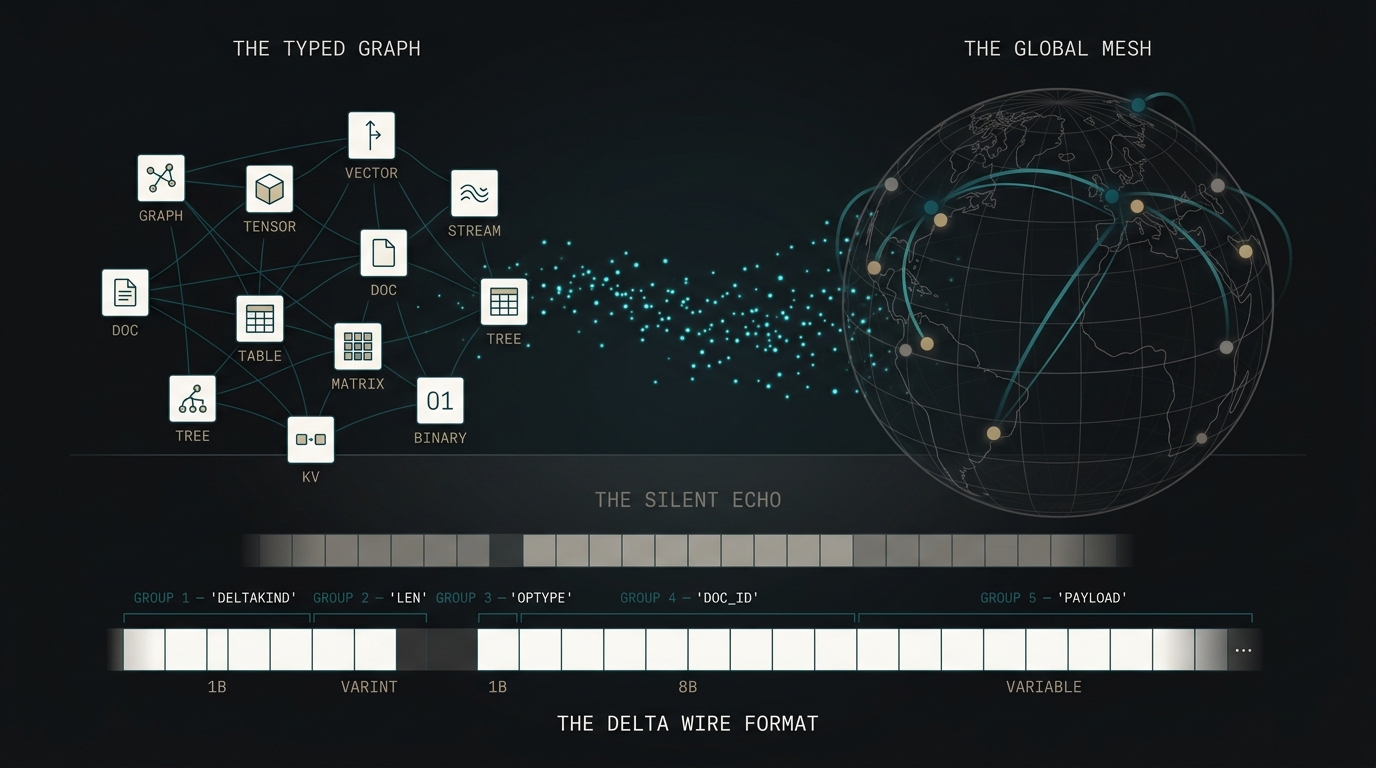

A data engine for complex data structures — trees, graphs, streams, tensors, tables, vectors.

Structure-aware. Zero-copy from network to compute. mgraph is data collaboration as a primitive.

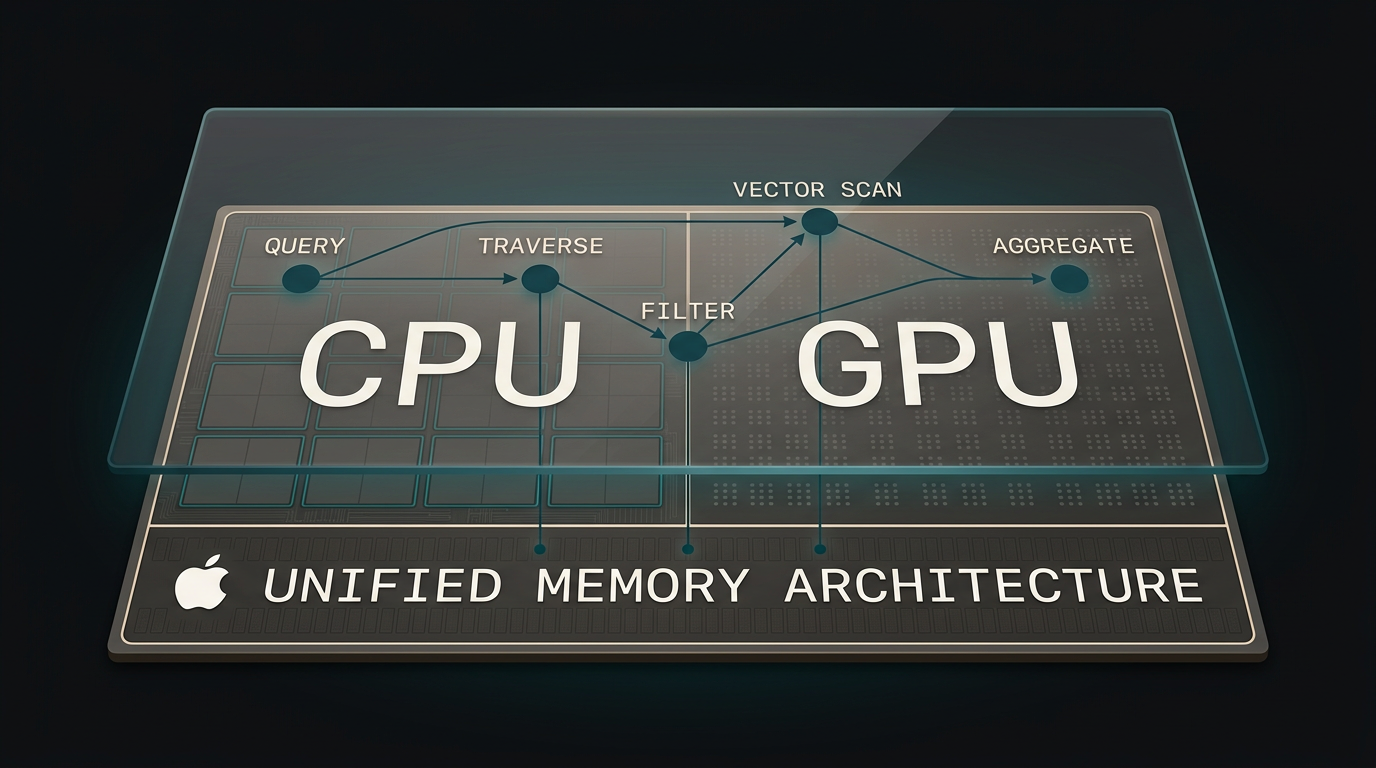

Vector and relational database built for Apple Silicon’s unified memory.

Treats the GPU as primary compute. CPU and GPU on shared memory for a previously unseen class of operations.

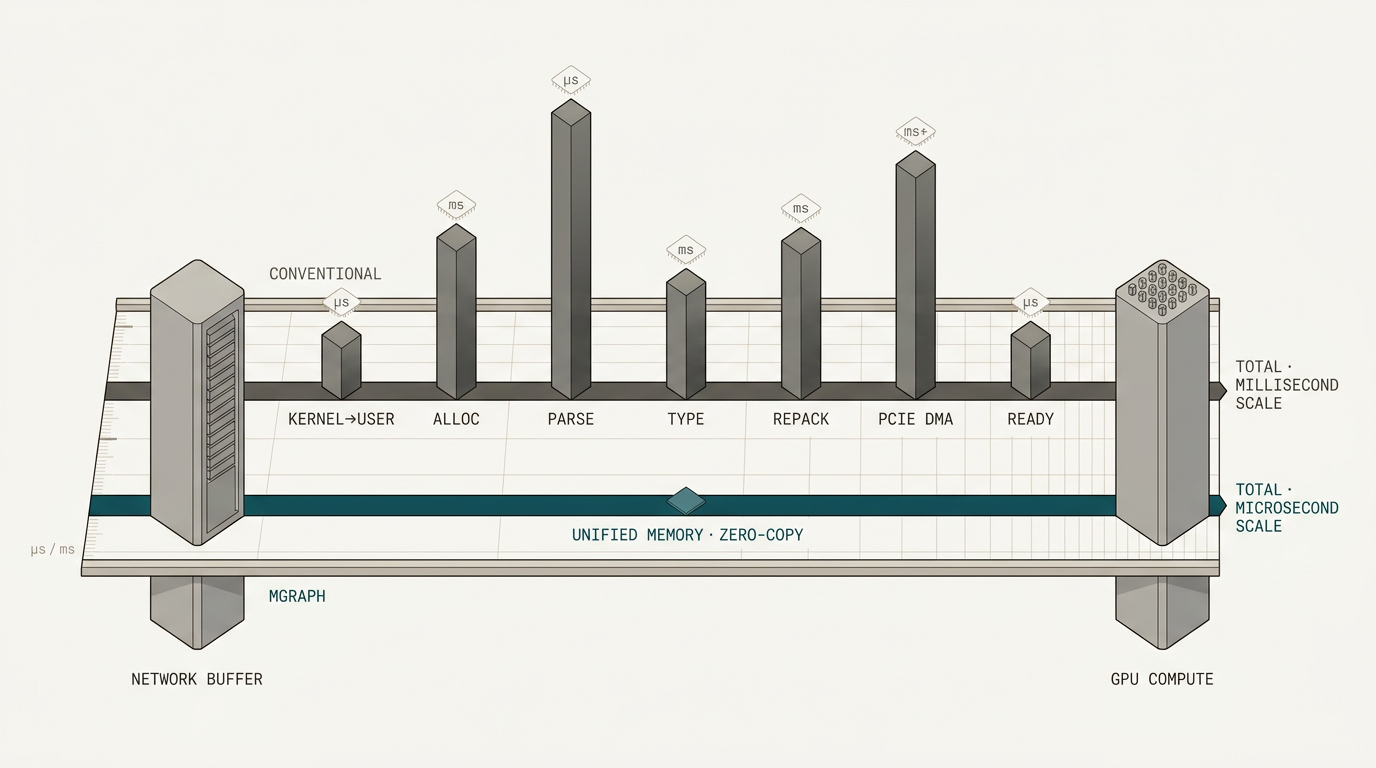

A direct line from wire to GPU.

Together, they provide a direct line from wire to GPU compute that was previously impossible.

Both are industry firsts. A structure-aware zero-copy data engine, and a database that treats the Apple Silicon GPU as primary compute. No existing system provides this path.

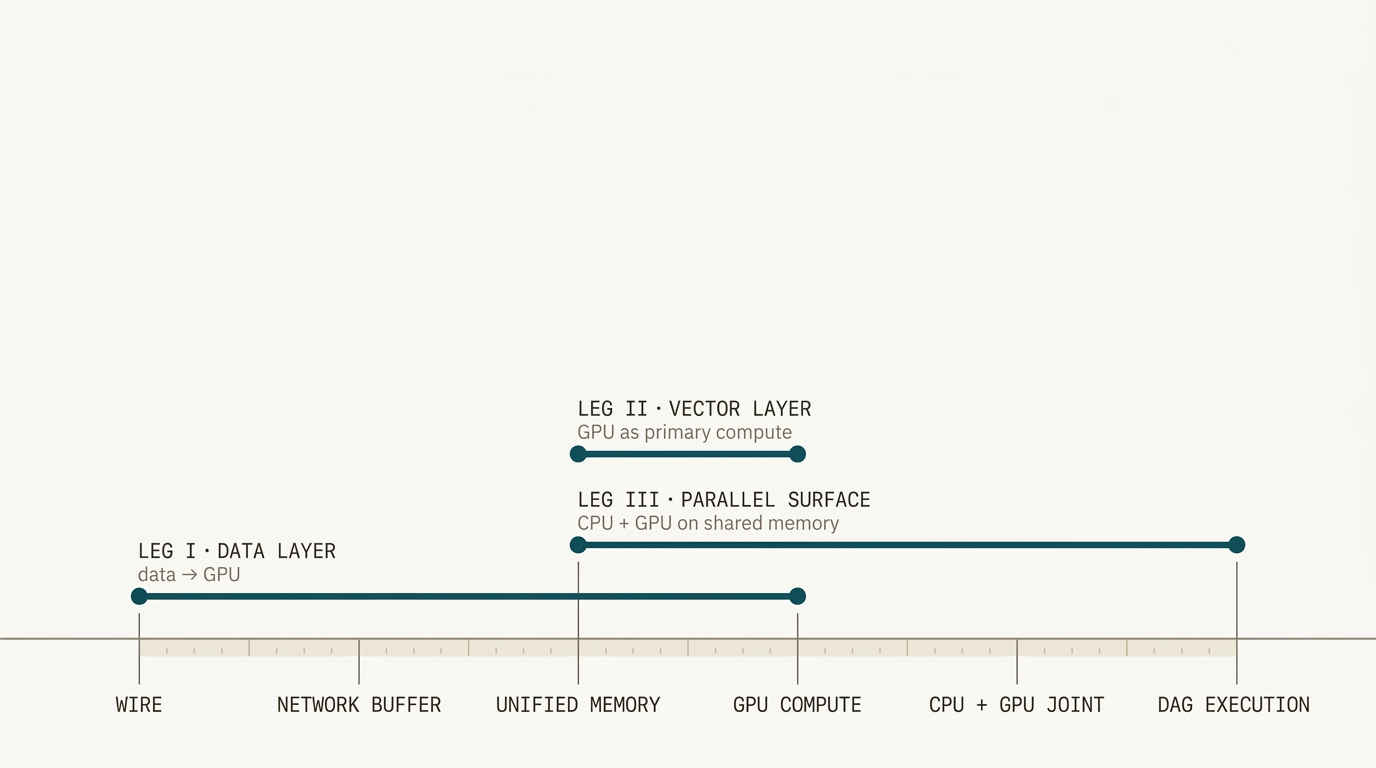

Measured in three parts.

Each leg benchmarks the architectural claim it rests on.

Zero serialisation from network to compute.

Structured payloads moved without translation at the boundary.

The first database built for Apple Silicon’s GPU.

On-device vector retrieval on hardware every developer already owns.

Coordinated CPU+GPU execution on shared memory.

A property unique to Apple’s unified memory architecture.

The data leg.

Structure-aware, zero-copy delta propagation at message-broker throughput — with database-grade atomic guarantees across complex types.
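A minimal sketch of what delta propagation over a nested document can look like, assuming deltas are lists of `(path, value)` pairs. Only changed leaves travel, and a replica applies them in place. `diff`, `apply_delta` and the `DELETED` sentinel are hypothetical names; mgraph's actual delta format is not described here.

```python
import copy

# Sentinel marking a key that exists in the old version but not in the new one.
DELETED = object()

def diff(old, new, path=()):
    """Return (path, value) pairs that turn `old` into `new`.

    Nested dicts are diffed recursively, so only changed leaves are emitted;
    any other changed value (lists, scalars) is sent whole.
    """
    delta = []
    for key in old.keys() - new.keys():
        delta.append((path + (key,), DELETED))
    for key, value in new.items():
        if key in old and isinstance(old[key], dict) and isinstance(value, dict):
            delta.extend(diff(old[key], value, path + (key,)))
        elif key not in old or old[key] != value:
            delta.append((path + (key,), value))
    return delta

def apply_delta(doc, delta):
    """Apply a delta to `doc` in place by walking each path to its parent node."""
    for path, value in delta:
        parent = doc
        for key in path[:-1]:
            parent = parent[key]
        if value is DELETED:
            del parent[path[-1]]
        else:
            parent[path[-1]] = copy.deepcopy(value)  # avoid aliasing the sender's objects

v1 = {"user": {"name": "ada", "tags": ["a"]}, "score": 1}
v2 = {"user": {"name": "ada", "tags": ["a", "b"]}, "rank": 3}
delta = diff(v1, v2)  # drop "score", replace user.tags, add "rank"; user.name is not sent
replica = copy.deepcopy(v1)
apply_delta(replica, delta)
assert replica == v2
```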

Broker throughput.

Structured data.

Atomic ops.

Delta propagation at Redis speed, with database-like atomic commits on complex data types. No production system combines all three today.
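A minimal sketch of an all-or-nothing commit spanning three structure types (a table, a vector map and a stream), assuming a single-threaded, in-memory store. `Store`, `insert_row`, `update_vector` and `append_event` are hypothetical names, not mgraph's API. Each commit runs its operations on a private copy and publishes that copy in one assignment, so a failure part-way through leaves nothing visible.

```python
import copy

class Store:
    """Toy in-memory store holding a table, a vector map and a stream side by side (hypothetical)."""

    def __init__(self):
        self.state = {"table": [], "vectors": {}, "stream": []}

    def commit(self, ops):
        """All-or-nothing: run every op on a private copy, then publish the copy in one assignment."""
        staged = copy.deepcopy(self.state)
        for op in ops:
            op(staged)  # an exception here abandons `staged`; self.state is untouched
        self.state = staged  # the single switch that makes the whole commit visible

def insert_row(row):
    return lambda s: s["table"].append(row)

def update_vector(key, vec):
    def op(s):
        old = s["vectors"].get(key)
        if old is not None and len(old) != len(vec):
            raise ValueError(f"dimension mismatch for {key!r}")
        s["vectors"][key] = list(vec)
    return op

def append_event(event):
    return lambda s: s["stream"].append(event)

store = Store()
store.commit([insert_row({"id": 1}), update_vector("doc1", [0.1, 0.2]), append_event("created doc1")])

# The vector update fails: the row already inserted into the staged copy is discarded,
# and the stream append never runs.
try:
    store.commit([insert_row({"id": 2}), update_vector("doc1", [0.3]), append_event("updated doc1")])
except ValueError:
    pass

assert store.state["table"] == [{"id": 1}]
assert store.state["stream"] == ["created doc1"]
```

The deep copy only keeps the sketch short. A real engine would avoid copying the whole state on every commit, for example with a write-ahead log or copy-on-write pages.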

Fast or structured. Today you pick one.

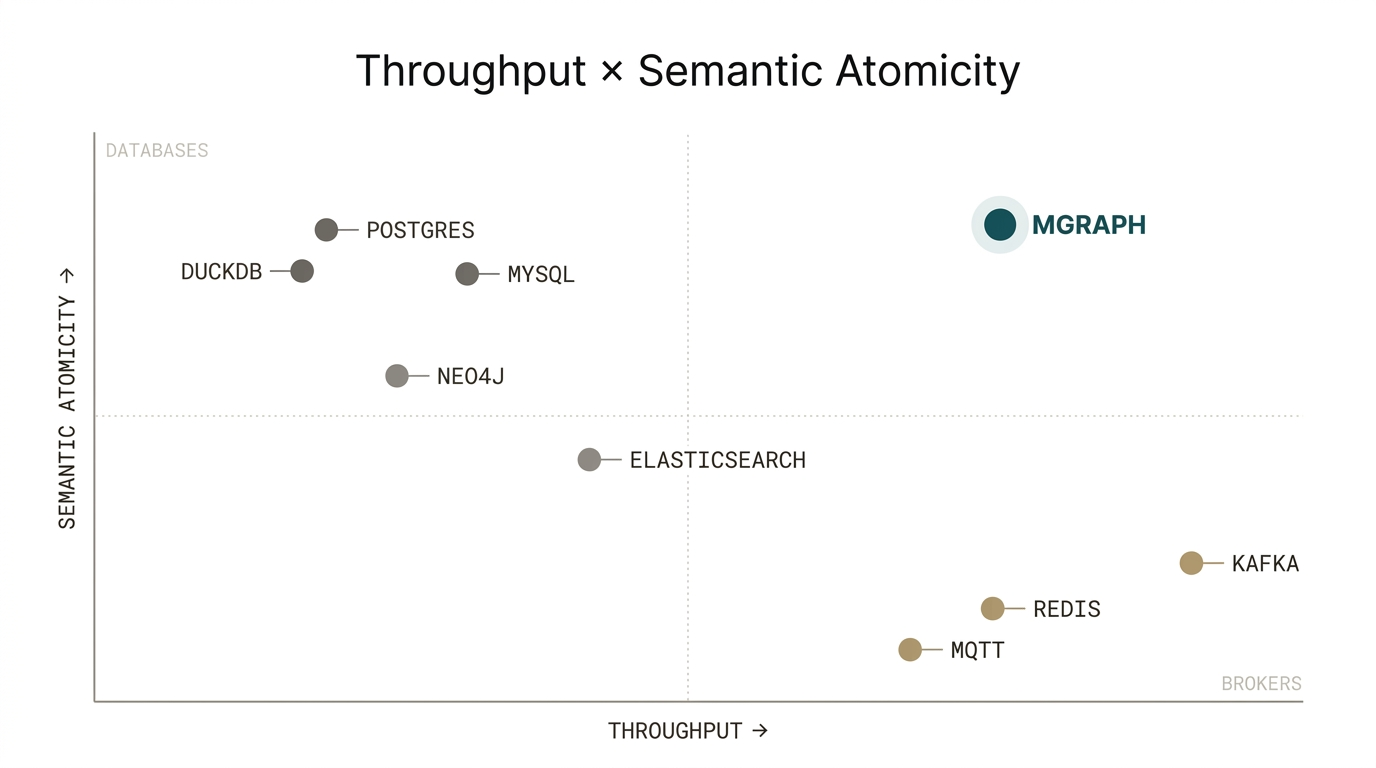

Throughput versus semantic atomicity across today’s systems.

Brokers give up structure for speed. Databases pay for structure in latency. The upper-right corner is empty, and that gap is what these benchmarks target.

Throughput against brokers. Atomics against databases.

| Bench | Against | Measured |
|---|---|---|
| Throughput | Redis · Kafka | Ops per second. Latency percentiles. Payload scaling. |
| Atomics | Postgres · DuckDB | Table insert, vector update, stream append — single commit. Baselines must serialise structured payloads into JSON or BLOB columns; mgraph operates on them natively. |
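The Atomics baselines store structured payloads as JSON or BLOB columns, so every read and write translates the data. A minimal Python sketch of the difference, assuming a hypothetical payload of float32 values held in a byte buffer: the JSON path rebuilds new objects from text, while `memoryview.cast` reads the same bytes in place. It illustrates the idea only and is not mgraph's wire format.

```python
import json
import struct

# Hypothetical payload: four float32 values laid out as they might arrive off the wire.
# "=" selects native byte order, matching memoryview's native "f" format below.
values = [0.5, 1.25, -2.0, 4.0]
wire = bytearray(struct.pack("=4f", *values))

# Baseline path: the payload is translated to JSON text and parsed back into new Python objects.
decoded = json.loads(json.dumps(values))

# Zero-copy path: reinterpret the received bytes as float32 in place; nothing is parsed or copied.
view = memoryview(wire).cast("f")

# The view aliases the wire buffer, so a write through it lands in the original bytes.
view[0] = 8.0
assert struct.unpack_from("=f", wire, 0)[0] == 8.0
assert view.tolist() == [8.0, 1.25, -2.0, 4.0]
assert decoded == values
```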

The GPU layer.

The first vector database to leverage Apple Silicon’s GPU for retrieval — on-device, zero-dependency, on hardware every developer already owns.

On-device vector retrieval on the Apple Silicon GPU.

Every Mac, iPad, and iPhone ships with a capable GPU. Today’s vector databases either leave it idle or route to a cloud service. msearch puts the full GPU in the developer’s hands.

Latency, throughput, and energy per query.

| Bench | Against | Measured |
|---|---|---|
| Single-query latency | pgvector · Chroma · Qdrant | P50 / P99 latency. Recall at target accuracy. Cold-start behaviour. Identical Apple Silicon hardware. |
| Throughput at scale | pgvector · Chroma · Qdrant | QPS. Latency under load. Index-build throughput. Index sizes approaching unified memory capacity. |
| Energy per query | Milvus on NVIDIA | Joules per query at matched recall and latency. NVIDIA cloud-provisioned. |
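Recall is usually reported against exact nearest neighbours. A minimal sketch, assuming cosine similarity (the metric is not specified above): an exhaustive search supplies the ground truth, and recall@k is the overlap between an index's answer and that ground truth. The function names are illustrative.

```python
import heapq
import math

def cosine(a, b):
    """Cosine similarity; assumes neither vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def exact_top_k(query, corpus, k):
    """Ground truth by brute force: score every vector, keep the ids of the k most similar."""
    scored = ((cosine(query, v), i) for i, v in enumerate(corpus))
    return [i for _, i in heapq.nlargest(k, scored)]

def recall_at_k(approx_ids, exact_ids):
    """Fraction of the true top-k neighbours that the approximate index also returned."""
    return len(set(approx_ids) & set(exact_ids)) / len(exact_ids)

corpus = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
truth = exact_top_k([1.0, 0.1], corpus, k=2)  # [0, 2]
print(recall_at_k([0, 1], truth))             # 0.5
```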

The parallel query surface.

CPU and GPU, coordinated on shared memory. A class of database operations available only on Apple Silicon.

Each processor on the regime it’s best at. Shared memory between them.

GPUs excel at massively parallel work. CPUs excel at sequential, branch-heavy work. By coordinating both on shared memory, we deliver performance that no system outside Apple Silicon can match.

GPU against the CPU baseline.

Benchmarked against Postgres and DuckDB for latency and throughput at varying data sizes.

- 3-way / n-way joins: algebraic predicates (AND, OR, NOT).

- Graph traversal: multi-hop walks across structured graphs.

- Group-by, aggregation: parallel reductions over columns.

- Sort, top-k: reported as a crossover measurement, showing the data size at which the GPU overtakes the CPU.
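One way to turn per-size timings into a crossover figure: find the first measured size where the GPU is faster, then interpolate back to where the timing gap crosses zero. This is a sketch under that assumption; the function name and the linear interpolation are illustrative choices, and the benchmark's actual method is not specified.

```python
def crossover(sizes, cpu_ms, gpu_ms):
    """Estimate the data size at which GPU time first drops below CPU time.

    `sizes` must be ascending; the two timing lists hold one measurement per size.
    Returns None if the GPU never overtakes the CPU within the measured range.
    """
    prev = None  # (size, gap) at the last point where the CPU was still at least as fast
    for n, c, g in zip(sizes, cpu_ms, gpu_ms):
        gap = c - g  # positive once the GPU is faster
        if gap > 0:
            if prev is None:
                return n  # GPU already wins at the smallest measured size
            n0, gap0 = prev
            # Linear interpolation between the bracketing sizes, where the gap crosses zero.
            return n0 + (n - n0) * (-gap0) / (gap - gap0)
        prev = (n, gap)
    return None
```

If sizes are spaced geometrically (1K, 10K, 100K), interpolating on the logarithm of size gives a more faithful estimate than the linear interpolation shown.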

Each processor in its lane.

Shared state in UMA.

Five workloads where the handoff cost disappears because there is no handoff.

- Hash join: GPU builds the hash table; CPU probes through collision chains. Baseline: Postgres · DuckDB.

- Two-phase top-k: GPU generates candidates; CPU runs the filter. Baselines: pure-CPU · pure-GPU.

- Nested loop + vector scan: CPU iterates the outer table; GPU scans the inner. Baseline: Postgres with pgvector.

- HNSW reindex + traversal: GPU reindexes while CPU traverses, on disjoint regions of shared memory. Baselines: pure-CPU · pure-GPU.

- Streaming aggregation: CPU manages window boundaries; GPU aggregates each window. Baseline: pure-CPU.
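The hash join workload splits into a build phase and a probe phase over one shared table. A single-threaded Python sketch of that split, assuming separate chaining for collisions: in the design described here the build would run on the GPU and the probe on the CPU against the same unified-memory table, whereas below both phases are ordinary Python functions sharing one list. `build` and `probe` are hypothetical names.

```python
def build(rows, key, n_buckets=8):
    """Build phase: hash each row's join key into a bucket; rows that collide share a chain."""
    buckets = [[] for _ in range(n_buckets)]
    for row in rows:
        buckets[hash(row[key]) % n_buckets].append(row)
    return buckets

def probe(buckets, rows, probe_key, build_key):
    """Probe phase: hash each probe row's key, then walk that bucket's collision chain."""
    for row in rows:
        for candidate in buckets[hash(row[probe_key]) % len(buckets)]:
            # A chain can hold different keys that landed in the same bucket, so compare exactly.
            if candidate[build_key] == row[probe_key]:
                yield {**candidate, **row}

users = [{"uid": 1, "name": "ada"}, {"uid": 2, "name": "lin"}]
orders = [{"order": 10, "uid": 2}, {"order": 11, "uid": 1}, {"order": 12, "uid": 3}]
table = build(users, "uid")
print(list(probe(table, orders, "uid", "uid")))
# [{'uid': 2, 'name': 'lin', 'order': 10}, {'uid': 1, 'name': 'ada', 'order': 11}]
```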

Four weeks.

One week per leg. One week to write.